-

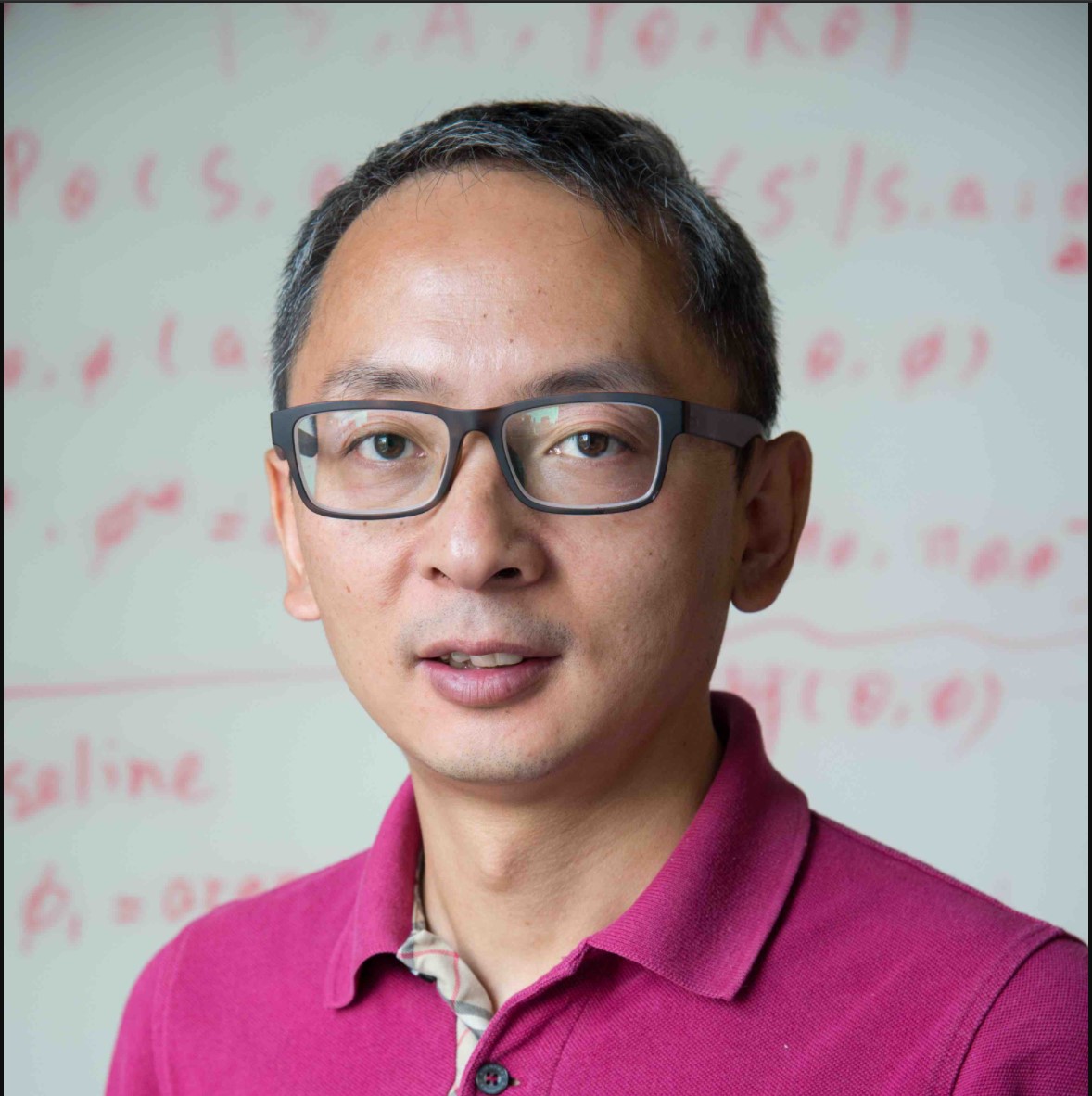

Marc Pollefeys

ETH Zurich

14:00-15:00 March 21, 2022

Computer vision for mixed reality and robotics

About

Marc Pollefeys is a Professor of Computer Science at ETH Zurich and the Director of the Microsoft Mixed Reality and AI Lab in Zurich where he works with a team of scientist and engineers to develop advanced perception capabilities for HoloLens and Mixed Reality. He was elected Fellow of the IEEE in 2012. He obtained his PhD from the KU Leuven in 1999 and was a professor at UNC Chapel Hill before joining ETH Zurich. He is best known for his work in 3D computer vision, having been the first to develop a software pipeline to automatically turn photographs into 3D models, but also works on robotics, graphics and machine learning problems. Other noteworthy projects he worked on are real‐time 3D scanning with mobile devices, a real‐time pipeline for 3D reconstruction of cities from vehicle mounted‐cameras, camera‐based self‐driving cars and the first fully autonomous vision‐based drone. Most recently his academic research has focused on combining 3D reconstruction with semantic scene understanding.

Georgia Gkioxari

Facebook AI Research

16:00-17:00 Dec 7, 2021

Understanding objects and scenes in 3D

2D visual recognition has seen unprecedented success, with the state of the art improving at every conference cycle. But as we develop sophisticated machines that recognize objects on the image plane and under challenging settings, we tend to ignore that the world is not planar and objects don't live in a 2D grid. On the other hand, understanding objects and scenes in 3D from 2D images is not straightforward. The challenges are great as real-world scenes are complex and contain many objects under conditions of occlusion and of varying appearances. In this talk, I will present some of our recent efforts toward the goal of 3D reasoning. To enable 3D understanding in diverse real-world scenes we pair advances from the 2D recognition literature and 3D learning. We bypass the need for 3D supervision by learning from multiple 2D views, e.g. from videos, and by leveraging 2D annotations with the help of differentiable rendering.

About

Georgia Gkioxari is a research scientist at Facebook AI Research (FAIR). She received a PhD in Computer Science and Electrical Engineering from the University of California at Berkeley under the supervision of Jitendra Malik in 2016. Her research interests lie in computer vision, with a focus on object and person recognition from static images and videos. In 2017, Georgia received the Marr Prize at ICCV for "Mask R-CNN". In 2021, she was awarded with the PAMI Young Researcher Award.

Chris Bregler

Google AI

15:00-16:00 Oct 28, 2021

Synthetic Media: New Opportunities and New Challenges

Recent AI breakthroughs in media creation techniques have opened up new possibilities for societally beneficial uses, but have also raised concerns about misuse. We can imagine translating a movie into any language in the world, and providing universal access to knowledge that was not possible before. This talk discusses recent trends in generative media creation tools for images, video, and sound, including new Movie Dubbing, Voice Cloning, Creative Photo Effects, DeepFakes for good and bad and, most importantly, CheapFakes. The latter include the most prevalent misinformation methods that are the hardest to detect automatically. We present efforts by Google and the community that are currently combating abuses, and we discuss long term solutions to the complex challenge of maintaining media integrity.

About

Chris Bregler is a Director and Principal Scientist at Google AI. He received an Academy Award in the Oscar’s Science and Technology category for his work in visual effects. His other awards include the IEEE Longuet-Higgins Prize for "Fundamental Contributions in Computer Vision that Have Withstood the Test of Time," the Olympus Prize, and grants from the National Science Foundation, Packard Foundation, Electronic Arts, Microsoft, U.S. Navy, U.S. Airforce, and other agencies. Formerly a professor at New York University and Stanford University, he was named Stanford Joyce Faculty Fellow, Terman Fellow, and Sloan Research Fellow. In addition to working for several companies including Hewlett Packard, Interval, Disney Feature Animation, LucasFilm's ILM, and the New York Times, he was the executive producer of squid-ball.com, for which he built the world's largest real-time motion capture volume. He received his M.S. and Ph.D. in Computer Science from U.C. Berkeley.

Jean-Francois Lalonde

Université Laval, Canada

14:00-15:00 Sept 13, 2021

Differentiable Compound Optics and Black-box Image Processing for End-to-end Camera Design

Most modern commodity imaging systems we use directly for photography---or indirectly rely on for downstream applications---employ optical systems of multiple lenses that must balance deviations from perfect optics, manufacturing constraints, tolerances, cost, and footprint. Although optical designs often have complex interactions with downstream image processing or analysis tasks, today's compound optics are designed in isolation from these interactions. Existing optical design tools aim to minimize optical aberrations, i.e., deviations from Gauss' linear model of optics, instead of application specific losses, precluding joint optimization with hardware image signal processing (ISP) and highly-parameterized neural network processing. This is complicated by the fact that configuration parameters of black-box ISPs often have complex interactions with the output image, and must be adjusted prior to deployment according to application-specific quality and performance metrics. Today, this search is commonly performed manually by "golden eye" experts or algorithm developers leveraging domain expertise, a process which is not compatible with end-to-end joint optimization. In this talk, I will present optimization methods for modeling compound optics as well as hardware ISPs that lift these limitations. We optimize entire lens systems jointly with hardware and software image processing pipelines, downstream neural network processing, and with application-specific end-to-end losses. To this end, we propose a learned, differentiable forward model for compound optics as well as for hardware ISPs, and an alternating proximal optimization method that handles function compositions with highly-varying parameter dimensions for optics, hardware ISP and neural nets. We assess our method across many camera system designs and end-to-end applications. We validate our approach in an automotive camera optics setting---together with hardware ISP post processing and detection---outperforming classical optics designs for automotive object detection and traffic light state detection. For human viewing tasks, we optimize optics and processing pipelines for dynamic outdoor scenarios and dynamic low-light imaging.We outperform existing compartmentalized design or fine-tuning methods qualitatively and quantitatively, across all domain-specific applications tested.

About

Jean-François Lalonde, Ph.D., is an Associate Professor in the Electrical and Computer Engineering Department at Université Laval since 2013. Previously, he was a Post-Doctoral Associate at Disney Research, Pittsburgh. He received a Ph.D. in Robotics from Carnegie Mellon University in 2011. His Ph.D. thesis won the CMU School of Computer Science Distinguished Dissertation Award. His research interests lie at the intersection of computer vision, computer graphics, and machine learning. In particular, he is interested in exploring how physics-based models and data-driven machine learning techniques can be unified to better understand, model, interpret, and recreate the richness of our visual world. To this end, his group has captured and published the largest datasets of indoor and outdoor high dynamic range wide-angle and omnidirectional images, freely available for research. He is actively involved in bringing research ideas to commercial products, as demonstrated by his patents and technology transfers with large companies such as Adobe and Facebook, and involvement as scientific advisor for high tech startups. More info at http://www.jflalonde.ca.

Iasonas Kokkinos

Snap Inc/UCL

16:00-17:00 June 3, 2021

Title: Humans, hands, and horses: 3D reconstruction of articulated object categories using strong, weak, and self-supervision.

Reconstructing 3D objects from a single 2D image is a task that humans perform effortlessly, yet computer vision so far has only robustly solved 3D face reconstruction. In this talk we will see how we can extend the scope of monocular 3D reconstruction to more challenging, articulated categories such as human bodies, hands and also animals such as birds, horses or cows. We will see that careful geometric modeling and optimization can deliver large rewards in particular as supervision becomes weaker and will demonstrate real-time, mobile phone-powered, Augmented Reality applications developed around the human body and hands. We will start from monocular 3D human pose estimation in-the-wild and describe HoloPose, a method that combines bottom-up, CNN-based methods for image understanding, with top-down parametric model fitting. We will then see how parametric model fitting can be used during training to supervise state-of-the-art feedforward CNNs and deliver state-of-the-art, real-time monocular hand mesh reconstruction. We will finally turn to self-supervised, non-rigid structure from motion-based approaches that allow us to reconstruct articulated object categories in 3D with hardly any supervision, allowing us to learn the parametric 3D deformation model in an end-to-end manner.

About

Iasonas Kokkinos is Research Manager in Snap and Associate Professor in the Department of Computer Science of University College London (UCL). Iasonas obtained his D.Eng in 2001 and PhD in 2006 from NTUA, was a postdoc in UCLA until 2008, and then joined the faculty of Ecole Centrale Paris where he stayed until 2016, prior to joining UCL. In 2016 he started working in industry as a research scientist with Facebook AI Research and then in 2018 he co-founded and served as CEO of Ariel AI, focusing on monocular human reconstruction for augmented reality; in 2020 he joined Snap following the acquisition of Ariel AI. His research interests are at the intersection of computer vision and deep learning, aiming at the development of models that unify problems of structured prediction and 3D shape modeling with deep learning, as well as multi-task learning. He publishes, reviews, and frequently serves as Area Chair in the major computer vision conferences (CVPR,ICCV,ECCV).

Yaser Sheikh

Facebook Reality Lab/CMU

15:00-16:00 June 2, 2021

Photorealistic telepresence

Telepresence has the potential to bring billions of people into artificial reality (AR/MR/VR). It is the next step in the evolution of telecommunication, from telegraphy to telephony to videoconferencing. In this talk, I will describe early steps taken at FRL Pittsburgh towards achieving photorealistic telepresence: realtime social interactions in AR/VR with avatars that look like you, move like you, and sound like you. If successful, photorealistic telepresence will introduce pressure for the concurrent development of the next generation of algorithms and computing platforms for computer vision and computer graphics. In particular, I will introduce codec avatars: the use of neural networks to unify the computer vision (inference) and computer graphics (rendering) problems in signal transmission and reception. The creation of codec avatars require capture systems of unprecedented 3D sensing resolution, which I will also describe.

About

Yaser Sheikh directs the Facebook Reality Lab in Pittsburgh, devoted to achieving photorealistic social interactions in augmented reality (AR) and virtual reality (VR). He is an associate professor (on leave) at the Robotics Institute, Carnegie Mellon University, where he directed the Perceptual Computing Lab, producing OpenPose and the Panoptic Studio. His research broadly focuses on machine perception and rendering of social behavior, spanning sub-disciplines in computer vision, computer graphics, and machine learning. He has served on committees He is an associate editor for the IEEE Transactions on Pattern Analysis and Machine Intelligence (PAMI) and has served as a senior program committee member for SIGGRAPH, CVPR, and ICCV. His research has been featured by various news and media outlets including The New York Times, BBC, CBS, WIRED, and The Verge. With colleagues and students, he has won the Hillman Fellowship (2004), Honda Initiation Award (2010), Popular Science’s "Best of What’s New" Award (2014), as well as several conference best paper and demo awards (CVPR, ECCV, WACV).